
ChatGPT: Friend or Foe?

By Rodman Ramezanian - Global Cloud Threat Lead, Skyhigh Security

February 24, 2023 5 Minute Read

Unless you’ve been living under a rock, you’ve surely read or heard about ChatGPT by now. This AI-driven chatbot has piqued the interest of millions of active users per day, fueling all sorts of ideas and use cases: writing essays for school projects, composing technical business papers, even paying tribute to late rappers like Tupac Shakur by fictitiously rhyming about cybersecurity (highly recommended for a bit of fun), and everything in between.

It does seem that the possibilities with ChatGPT are limitless.

The use of Artificial Intelligence (AI) in cybersecurity isn’t exactly novel; security products have leveraged AI and Machine Learning (ML) for years now. For instance, AI/ML is now commonly used to analyze traffic and data in real time to identify anomalous activity before it escalates into a security breach. Innovations like these are extremely welcome when they offer security teams earlier insight into potential indicators of compromise.

Implications

In spite of the benefits that AI and ML have brought to the realm of cybersecurity, ChatGPT represents a new level of potential risk. While many AI-guided tools have focused on detecting and preventing suspicious activity, ChatGPT has the ability to actively assist threat actors in their efforts to attack systems.

I’ll demonstrate this with an example. ChatGPT wouldn’t actually write a phishing email with everything I’d hypothetically need to launch an attack (see below – there seems to be a rudimentary ethical filter).

It would, however, write an innocuous email template regarding a fictitious work event that could be weaponized for phishing purposes with very little effort (below).

Now, one could argue that malicious actors already automate attacks and leverage some forms of AI/ML models to achieve their own twisted efficiencies. Following that same train of thought, however, ChatGPT could be seen as something of a gift to them. It could very easily broaden their horizons by allowing them to generate enormous numbers of unique attacks, and so launch coordinated, targeted strikes at vast scale.

At our recent Skyhigh Sales Kick-Off event in Las Vegas, our CEO, Gee Rittenhouse, shared a harrowing example of how a fourth-grade student could now use ChatGPT, ostensibly for educational purposes, to formulate a buffer overflow attack for a commonly used web browser.

Thinking bigger-picture for a second here: that means we’re not only trying to thwart the highly skilled hackers who know their way around sophisticated exploits and vulnerabilities; we could potentially now also face a far bigger army of complete novices, youngsters, and basically any curious web user who can reach chat.openai.com.

On the flipside, could ChatGPT be utilized to help organizations better secure their systems?

There’s no doubt that ChatGPT’s power and ability could be harnessed to generate huge amounts of data for defensive purposes: facilitating system stress tests, performing fuzzing tests against software under development, and perhaps even simulating malicious actors for incident response tabletop exercises.

Food for Thought

While ChatGPT can be used to strengthen our defenses, it can also be exploited by malicious actors to carry out attacks more easily and effectively, as the earlier examples show. It is therefore essential for security practitioners to stay vigilant and keep up to date on the latest developments in AI and how it can be used in the realm of cybersecurity.

This means not only being aware of the potential benefits of these tools, but also being prepared to defend against their potential misuse. As with any technology, it is crucial to understand the risks and take steps to mitigate them.

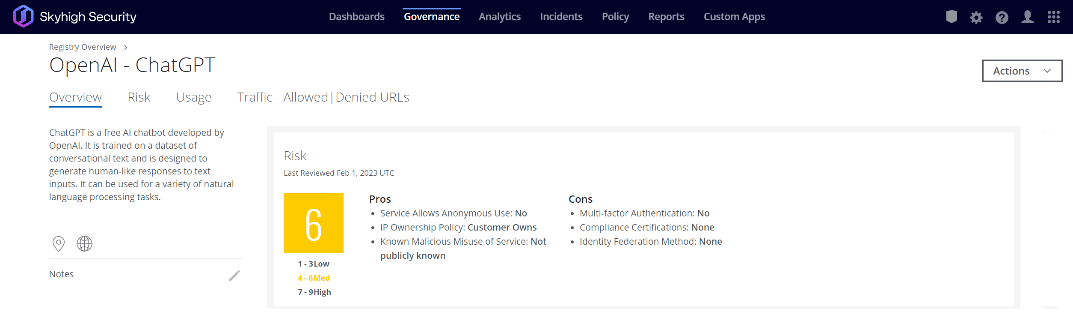

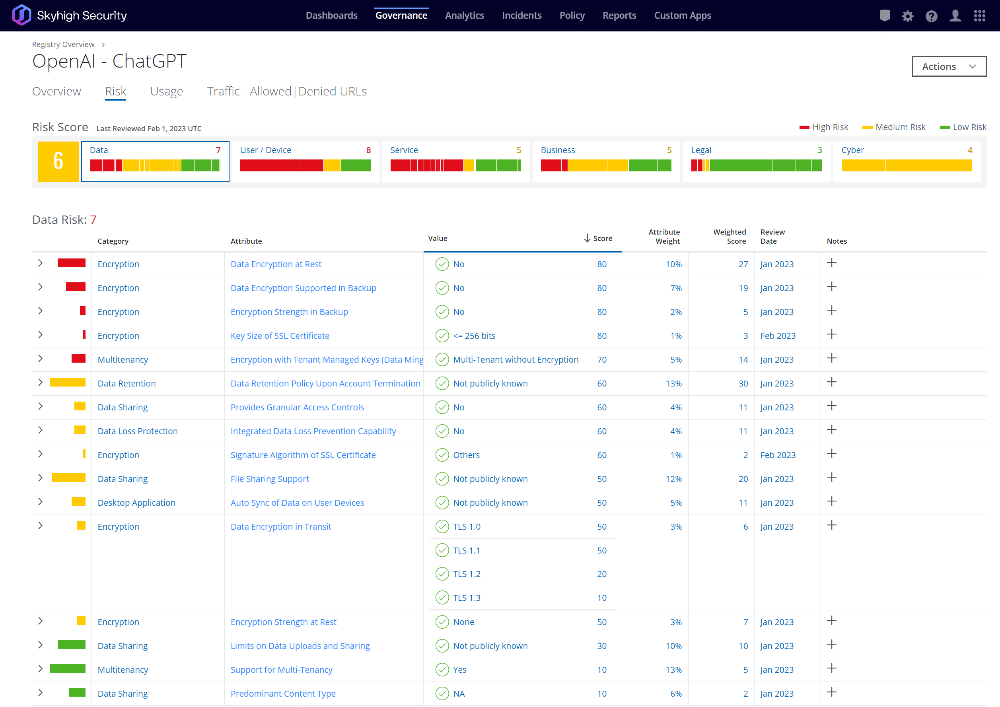

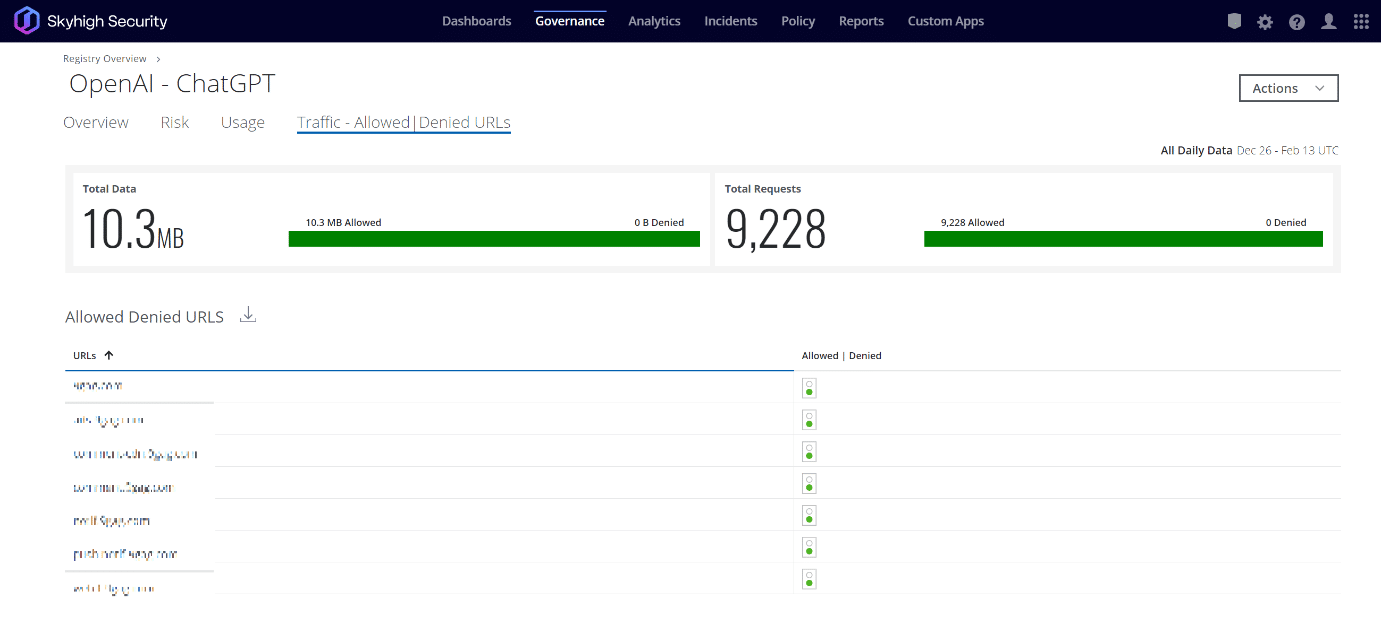

Skyhigh Security’s Cloud Registry offers a comprehensive risk-based lens into over 30,000 SaaS, PaaS, and IaaS services – with ChatGPT being just one of them.

Within this Cloud Registry, Skyhigh Security computes and assigns each cloud service a CloudTrust rating that indicates its enterprise-readiness, using a weighted average of 55 Risk Attributes developed with the Cloud Security Alliance (CSA). These attributes span Data, User/Device, Service, Business, Legal, and Cyber categories. The result is normalized to a value between 1 and 9. Companies use the CloudTrust rating as part of defining cloud governance policies.
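To make the idea of a weighted average normalized to a 1 to 9 scale concrete, here is a minimal sketch. It is not Skyhigh Security’s actual formula: the `RiskAttribute` type, the attribute names, the weights, the 0.0 to 1.0 per-attribute risk scale, and the assumption that a higher rating means higher risk are all hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskAttribute:
    """One scored attribute of a cloud service (hypothetical structure)."""
    name: str
    category: str   # e.g. "Data", "Legal", "Cyber"
    weight: float   # relative importance; illustrative values only
    risk: float     # assumed scale: 0.0 (lowest risk) to 1.0 (highest risk)


def risk_rating(attributes):
    """Weighted average of attribute risks, mapped linearly onto 1..9.

    Assumption: 1 is the least risky rating and 9 the most risky.
    """
    total_weight = sum(a.weight for a in attributes)
    if total_weight <= 0:
        raise ValueError("at least one attribute with a positive weight is required")
    for a in attributes:
        if not 0.0 <= a.risk <= 1.0:
            raise ValueError(f"risk for {a.name!r} must be between 0.0 and 1.0")

    weighted_average = sum(a.weight * a.risk for a in attributes) / total_weight
    # Map the 0..1 average onto the 1..9 range and keep one decimal place.
    return round(1 + 8 * weighted_average, 1)


# Illustrative attributes only; these are not taken from the real registry.
example_attributes = [
    RiskAttribute("data_encrypted_at_rest", "Data", weight=3.0, risk=0.2),
    RiskAttribute("ip_ownership_terms", "Legal", weight=2.0, risk=0.8),
    RiskAttribute("known_vulnerabilities", "Cyber", weight=1.0, risk=0.5),
]
example_rating = risk_rating(example_attributes)
```

A governance policy could then compare the resulting rating against a threshold, for example blocking or coaching users on services above a chosen value.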

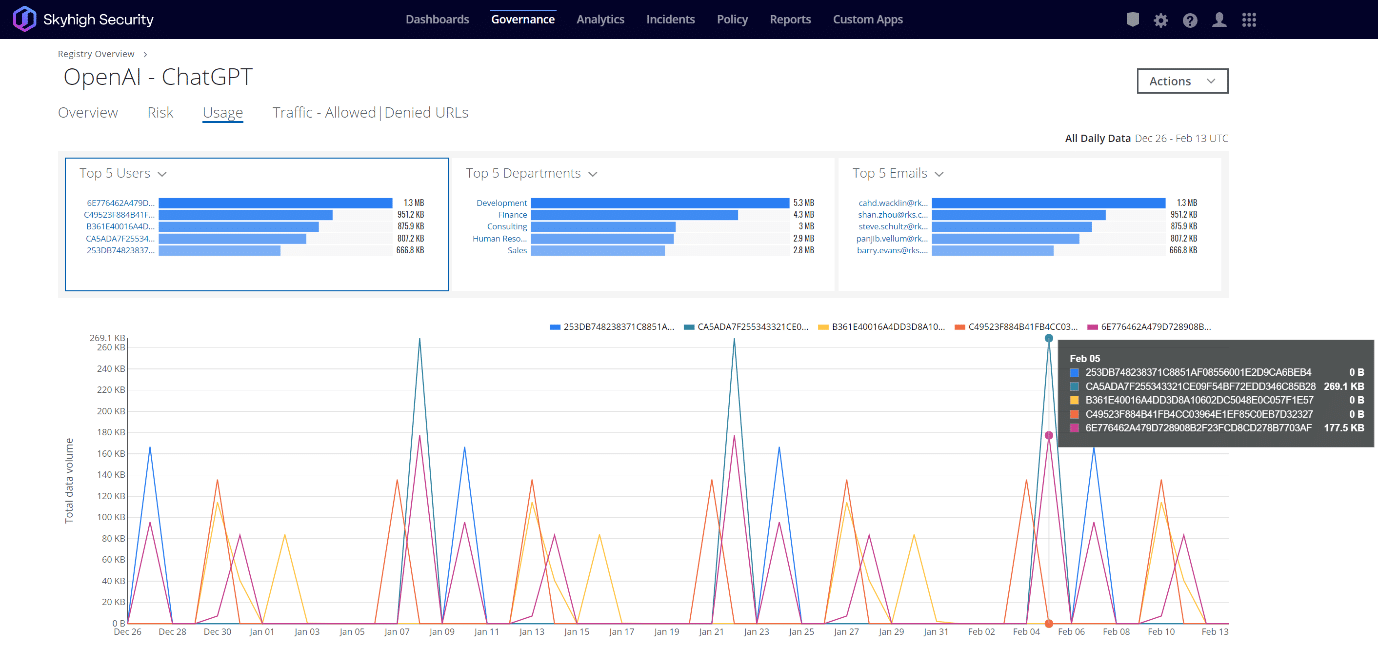

As a security practitioner, once you’ve identified the risks associated with a given service or entity, you’ll ultimately want to ask three questions:

- Have any of our users browsed to or used this service? If so, how many? And who exactly?

- Has any part of our web and cloud infrastructure accessed this service?

- If the answer to either of the first two questions is “yes”, how much data has been exchanged between our organization and that service?
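As a rough illustration, the three questions above can be answered from web proxy or gateway logs with a simple aggregation. The sketch below assumes a hypothetical `LogRecord` shape in which each record is already labeled as coming from a user or from infrastructure; real log schemas will differ, and this is not how any particular product implements it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LogRecord:
    """One outbound request from a proxy log (hypothetical schema)."""
    source: str          # user name or workload identifier
    source_type: str     # assumed labels: "user" or "infrastructure"
    host: str            # destination host name
    bytes_sent: int      # uploaded to the service
    bytes_received: int  # downloaded from the service


def summarize_service_usage(records, service_domain):
    """Answer: which users, which infrastructure, and how much data."""
    domain = service_domain.lower()
    users, infrastructure = set(), set()
    bytes_sent = bytes_received = 0

    for record in records:
        host = record.host.lower()
        # Match the domain itself or any subdomain, but not look-alikes
        # such as "notopenai.com".
        if host != domain and not host.endswith("." + domain):
            continue
        if record.source_type == "user":
            users.add(record.source)
        else:
            infrastructure.add(record.source)
        bytes_sent += record.bytes_sent
        bytes_received += record.bytes_received

    return {
        "users": sorted(users),
        "infrastructure": sorted(infrastructure),
        "bytes_sent": bytes_sent,
        "bytes_received": bytes_received,
    }
```

Calling `summarize_service_usage(records, "openai.com")` returns the distinct users and workloads that reached the service, along with upload and download totals, which covers the who, what, and how much in a single pass over the logs.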

Once you know what you’re up against, you can take the appropriate actions to protect your devices, web, and cloud environments.

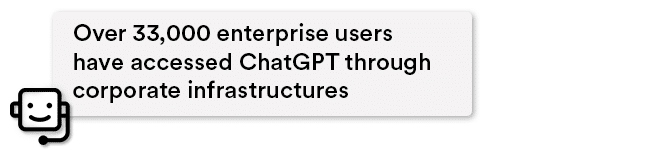

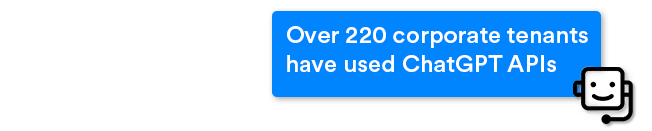

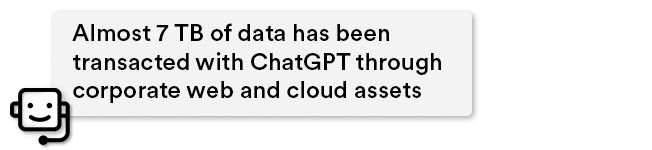

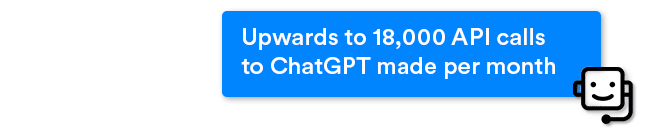

From analyzing Skyhigh Security’s global telemetry, we see that between November 2022 and February 2023:

When you’re facing an advanced, leading-edge AI engine that’s freely available on the web, this is the kind of visibility, insight, and control you need across your entire environment.

Wrapping Up

ChatGPT is still in its infancy, and there are already plenty of moral issues to consider with its use.

It isn’t difficult to imagine a future in which advanced AI chatbots make our lives easier and our work better. Yet it is also possible that hackers may gain the upper hand by using ChatGPT more effectively for nefarious purposes.

Ultimately, though, in our struggles to tip the scales of cyber defense in our favor, if something like ChatGPT can aid the bad actors in any way, shape, or form, it is worth our attention and concern!

Check out Skyhigh Security’s protection solutions and request a demo to see for yourself.
